Claude Mythos is overhyped. Their "too dangerous to release" Marketing Strategy is Working - Ivan Patriki

Sam Altman's ChatGPT has been doing this for 7 Years... Now Dario Amodei's Claude is taking over.

Every serious investor knows this and is just playing along.

Question: What’s better for investors?

The tool that’s so good it might end the world? Or the one that isn’t?

If you’ve been following the AI space for more than five minutes, you already know the answer. “Too dangerous to release” isn’t a safety posture.

For the record, I love Claude. I prefer it over OpenAI - but this whole Claude Mythos marketing strategy has never been more obvious, even with their “safety board”.

Seven Years of the Same Headline

In 2019, OpenAI released GPT-2 and announced they were withholding it from the public because it was too powerful, too dangerous, too liable to flood the internet with “misinformation”.

The World Economic Forum covered it breathlessly. TechCrunch ran the headline.

Everyone was freaking out, while they were raising billions of dollars.

In 2021, a tool that had been “previously thought too dangerous” quietly dropped. The world didn’t end.

In 2023, GPT-4 shipped with formal warnings about potential misuse.

In 2024, their o1 model came with headlines about “self-preservation behaviors.”

In 2025, they’re admitting ChatGPT causes psychiatric harm and GPT-5 is being flagged as “high risk” for biological and chemical weapons.

Seven years. Same story. Different model number.

At some point, you have to ask yourself: are these companies genuinely worried about their technology? Or has OpenAI discovered that fear is the most effective marketing tool in Silicon Valley?

And is Amodei starting to copy the strategy?

The Business Logic of Existential Dread

Think about what the “dangerous AI” narrative actually does for these companies:

It signals that their technology is powerful enough to matter. It creates urgency and FOMO among investors, enterprise customers, and governments.

Enter Dario Amodei and the Anthropic Playbook

Dario Amodei worked at OpenAI. He watched them refine this strategy up close, then he left and founded Anthropic. To his credit, he ran the exact same play, but harder - and arguably built a better, safer product.

Anthropic’s brand identity is “we are the responsible ones.” They portray themselves as the “good guys” compared to the other money-hungry startups.

They were even recently blacklisted by the Pentagon because Claude refused to do mass surveillance and autonomous weapons.

The fact that they recently gave up hundreds of millions in military contracts gives Anthropic another reason to start looking for cash, and to triple down on their newest model being the craziest model out there.

And then there is their new “security council”, a body of “independent organizations” convened to evaluate whether this model is safe enough for the world.

Sounds serious. Let’s look at who’s on it.

The “Independent” Council That Isn’t

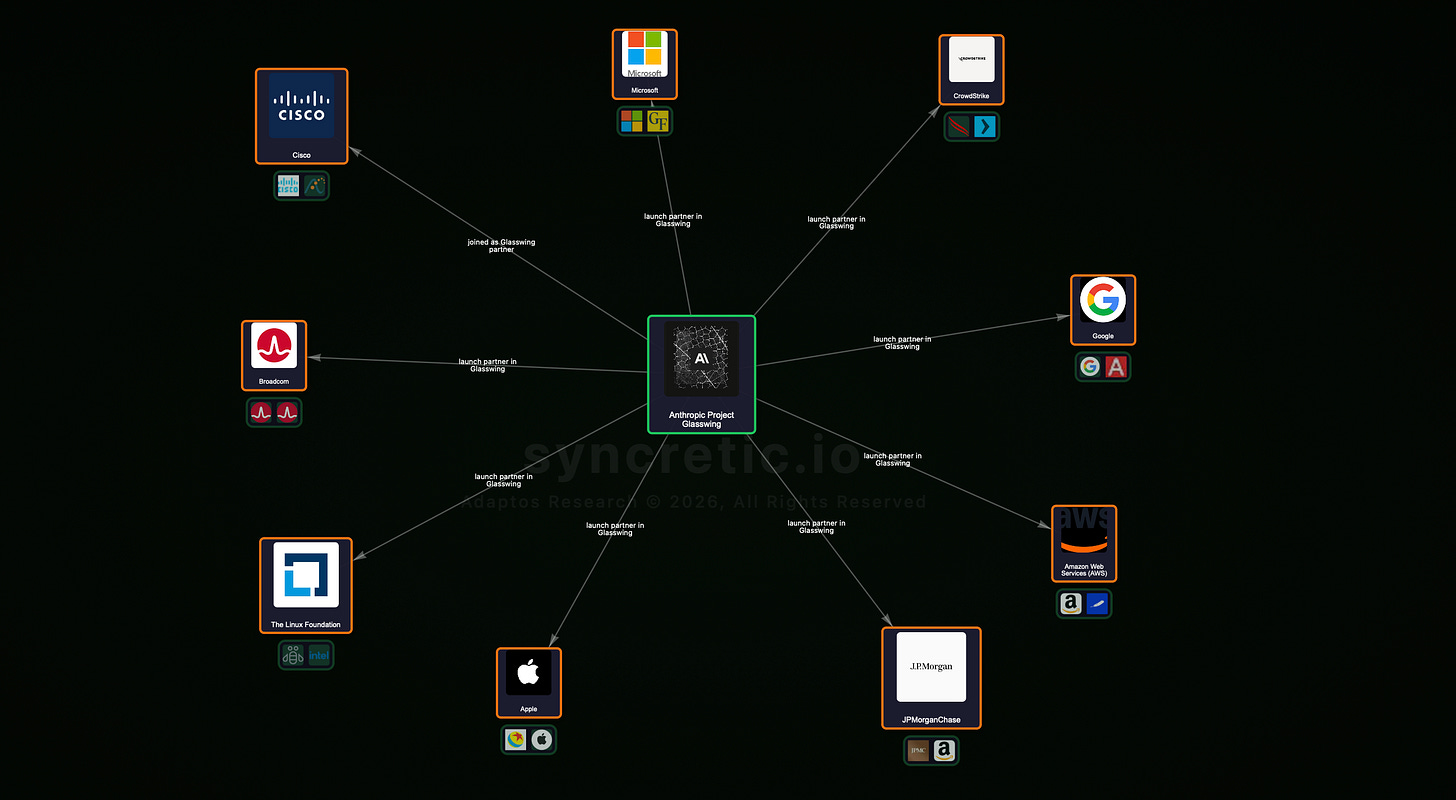

Here is the list of companies Anthropic has assembled as independent safety validators:

Amazon: One of Anthropic’s largest investors, with a multi-billion dollar stake and a cloud infrastructure partnership that ties their futures together.

Apple: Already paying Anthropic enormous sums to power AI features — Bloomberg’s Mark Gurman has reported on the scale of this relationship.

Broadcom: Anthropic has contracted them for tens of billions of dollars in custom chip development.

Cisco: A significant Anthropic investor.

CrowdStrike: Already on the Anthropic payroll for security services.

Google: One of the earliest and largest Anthropic investors, with a reported multi-billion dollar commitment.

JPMorgan Chase: Major investor.

The Linux Foundation: Anthropic earlier co-founded a dedicated fund directly with this organization, the Agentic AI Foundation.

Microsoft: Huge investor.

NVIDIA: Huge investor.

Palo Alto Networks: Their entire AI security stack already runs on Claude, and they’ve been directly partnered for a long time.

Let me ask you something: if you were designing a truly independent safety council, would you stack it entirely with your own shareholders, chip suppliers, enterprise customers, and co-funding partners?

Of course not. Because that wouldn’t be a safety council. That would be a board meeting with better PR.

This isn’t a committee of neutral experts evaluating existential risk. It’s a coalition of financially motivated insiders who have every incentive to bless the product so it can ship, scale, and generate returns on their investments.

What This Is Actually About

Claude Mythos is not going to end the world. It is not going to trigger a nuclear exchange between India and Pakistan. It is not a superintelligent war machine.

It is a large language model with impressive capabilities and a very sophisticated marketing team.

The “security council” announcement exists for three reasons:

1: To eat OpenAI’s lunch in the press cycle. Every day spent covering Anthropic’s responsible safety theater is a day not spent covering GPT-5, Grok, or Meta’s Llama models.

2: To signal seriousness to regulators. Governments around the world are trying to figure out how to regulate AI. Companies that demonstrate “voluntary safety governance” have a massive head start in shaping whatever rules eventually emerge.

3: Money. To justify the valuation. “We’re building something so powerful we had to assemble a global coalition to make sure it doesn’t destroy civilization” is a compelling story.

The Part No One Wants to Say Out Loud

Influencers, mainstream media, and podcast hosts all benefit from this hype - it’s more interesting to say the world is ending.

Content creators get clicks, investors benefit, and normal people start blackpilling.

The more grounded read is simpler: these are powerful, useful, genuinely transformative tools being built by companies with normal commercial incentives.

You do not need to be afraid. You do need to be skeptical - especially of the people who profit most from your fear.

As an investor - keep this marketing hype in mind & don’t get too carried away.
